This article details nine common misconceptions in data center construction and planning and analyzes them one by one, to help construction personnel avoid these problems and plan their data centers more sensibly.

Many enterprises operate beyond their safe capacity, with very limited or no room for expansion. According to statistics, the average service life of a data center is 9 years. However, Gartner data suggests that any facility that has been in operation for more than seven years is becoming obsolete.

Outdated facilities and overcrowded space become obstacles to business growth, and building a new data center is sometimes the only solution. When speed to market is the key to success, companies that do not properly assess their business needs can drive their data center construction into a dead end, ending up with a facility that neither guarantees availability nor meets future business needs.

So how can you avoid making mistakes when building and expanding your data center? When designing and building a data center, the approach to implementation is critical. Organizations often plan their data centers based solely on power per unit area, cost per unit of floor area, and tier level, but these metrics may not align with their overall business goals and risks. Poor planning leads to poorly used investment and higher operating costs.

Many companies get lost in the minutiae, focusing too much on "speed and supply", environmental friendliness, concurrent maintenance, power usage effectiveness (PUE), and green building (LEED) certification. While all of these matter in decision-making, focusing too much on the details can obscure the big picture. Many companies miss business opportunities because of how their data center expansion is handled, so expansion projects should be approached holistically.

There are many consulting firms and individuals who can help with planning, but evaluating their proposals and ideas is itself an enormous amount of work. Data centers with a critical IT capacity of 1-3 MW fall into this trouble easily. Medium-sized data center users tend to have lower criticality requirements than large multi-megawatt users. At the same time, their in-house technicians may have limited expertise and experience in carrying out an expansion, and the volume of information coming from multiple parties can lead to confusion and poor decisions.

Myth 1: Total cost of ownership (TCO) is not taken into account

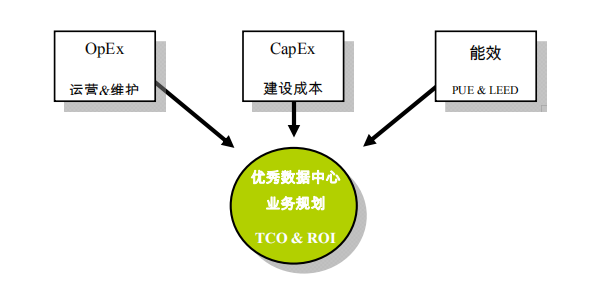

Focusing solely on investment costs is an easy trap to fall into, because the up-front cost of new construction or expansion can give a misleading picture. While investment cost (CapEx) modeling is critical, the overall business planning process is severely undermined if the operating costs (OpEx) of the data center infrastructure are not accounted for.

Modeling data center operating costs (OpEx) requires two key sub-items: operations costs and maintenance costs. Maintenance costs cover everything needed to maintain the infrastructure in the data center, including maintenance contracts for OEM equipment, data center cleaning, and hiring contractors for repairs and upgrades. Operations costs cover everything associated with day-to-day operations and on-site staff, including salaries, professional, technical, and safety training, keeping operational documentation and records, capacity management, and quality-monitoring policies and procedures. Without a budget covering 3-7 years, it is not possible to model return on investment (ROI) and make informed decisions.

When planning a new business-critical data center or the expansion of an existing one, the best approach is to focus on the three fundamentals of total cost of ownership (TCO): 1) investment costs (CapEx), 2) operating costs (OpEx), and 3) energy costs. If any of these items is left out, the resulting model cannot match the enterprise's risks against its business expenditure. Procurement and construction decisions made without weighing TCO carry significant risk.
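The three TCO components can be combined in a very small model. The following is a minimal sketch, not a complete financial model: the class and function names, the sample figures, and the 7-year horizon are illustrative assumptions, energy cost is approximated as average IT load times an assumed annual-average PUE, and discounting and price escalation are deliberately ignored.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class TcoInputs:
    """Hypothetical inputs for a simple, undiscounted TCO estimate."""
    capex: float                 # one-time investment cost
    annual_opex: float           # operations + maintenance cost per year
    it_load_kw: float            # average IT load in kW
    pue: float                   # assumed annual-average PUE
    energy_price_per_kwh: float  # electricity tariff
    years: int                   # planning horizon (the article suggests 3-7 years)


def annual_energy_cost(inp: TcoInputs) -> float:
    """Facility energy cost per year: IT energy scaled up by PUE."""
    return inp.it_load_kw * inp.pue * HOURS_PER_YEAR * inp.energy_price_per_kwh


def total_cost_of_ownership(inp: TcoInputs) -> dict:
    """Sum CapEx, OpEx, and energy cost over the planning horizon."""
    opex = inp.annual_opex * inp.years
    energy = annual_energy_cost(inp) * inp.years
    return {
        "capex": inp.capex,
        "opex": opex,
        "energy": energy,
        "total": inp.capex + opex + energy,
    }


# Illustrative numbers only; they are not taken from the article.
example = TcoInputs(
    capex=10_000_000,
    annual_opex=800_000,
    it_load_kw=1000,
    pue=1.5,
    energy_price_per_kwh=0.10,
    years=7,
)
tco = total_cost_of_ownership(example)
for item, cost in tco.items():
    print(f"{item:>7}: {cost:,.0f}")
```

With these sample inputs, OpEx and energy together exceed the initial investment over the horizon, which is the kind of result a CapEx-only budget hides.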

Myth 2: Inaccurate construction cost estimation

Another common mistake comes from the estimate itself. When the budget approved by the board is too small for the new or expanded data center, the project fails. The cycle typically unfolds as follows:

• A funding request is submitted and provisionally approved. The finance department should be involved in the investigation and data gathering at this stage so that the budget is as realistic as possible.

• Time is spent moving the budget through the decision process.

• A detailed investigation then finds that the original budget proposal was too low.

• The project is delayed, and employees, service delivery to external and internal customers, and expectations are all affected.

• The organization ends up back where the cycle began, precisely because the first mistake was not avoided: the total cost of ownership (TCO) was not taken into account and no comprehensive financial model was established.

The construction cost problem could easily have been avoided, but the second mistake becomes inevitable if the third one, failing to set appropriate design metrics and performance parameters, is not avoided.

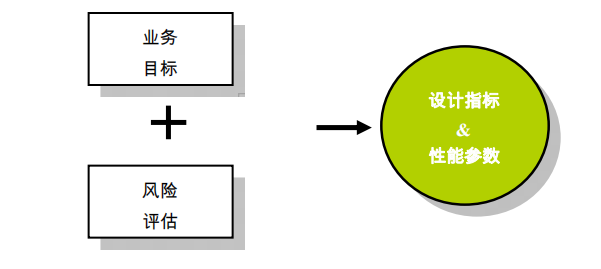

Myth 3: Appropriate design indicators and performance parameters are not formulated

Two "wrong moves" can push an enterprise into a spiral of overspending. First, everyone may like a design with Tier 3 or higher availability, but not everyone really needs it. Second, the chosen power densities, in kW/m² and kW per cabinet, often do not match actual business needs.

In many cases, insisting that the density must be 3 kW/m² is unreasonable. Do not over-plan and over-build; it only wastes money. Data centers with higher availability also carry higher operating and energy costs. Falling into this trap undermines the foundation of the business model and the ROI analysis. Start by establishing the right design metrics and performance parameters, then structure investment and operating costs around them.

Myth 4: Facility location overrides design indicators

Companies often begin site selection before design metrics and performance parameters have been determined. Without this critical information, surveying and evaluating sites has little practical value. This "cart before the horse" situation is common among data center users in the 1-3 MW range. Large multi-megawatt users are usually experts in this field and take into account the availability and cost of utility power, fiber network access, and geographic risks (e.g., earthquakes, typhoons, and flood zones), whereas smaller users often build or renovate data centers in the core areas of their business coverage, as their business model suggests.

Selecting a site prematurely, or on geography alone, results in a location that does not meet the design requirements. For example, it is convenient to place a data center in the office building or a few blocks away, but business-critical data centers have a range of site requirements that are often expensive to meet in multi-tenant commercial buildings, where space for future expansion is also limited.

Myth 5: Spatial planning overrides design indicators

The physical space and footprint needed for critical data center infrastructure components can be enormous. In the highest-availability systems, the ratio between the raised floor area (the area occupied by the IT room) and the area occupied by infrastructure equipment can be as high as 1:1. Many businesses and organizations plan their space requirements based solely on the area occupied by IT equipment, but cooling and electrical equipment also take up a lot of space. In addition, many companies overlook the area needed for offices. It is therefore extremely important to determine the design metrics before moving on to space planning. Without design metrics, it is impossible to calculate the total space and area that will meet the overall need.
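The space calculation described above can be sketched in a few lines. This is a rough illustration under stated assumptions: the function name, the `infra_ratio` parameter (infrastructure area per unit of IT room area, up to 1.0 for the 1:1 case mentioned in the text), and the sample areas are all hypothetical.

```python
def total_floor_area(it_area_m2: float, infra_ratio: float, office_area_m2: float) -> dict:
    """Estimate total area from IT room area, an infrastructure ratio, and office space.

    infra_ratio is the infrastructure area divided by the IT room area;
    1.0 corresponds to the 1:1 ratio of the highest-availability designs.
    """
    infrastructure = it_area_m2 * infra_ratio
    return {
        "it_room": it_area_m2,
        "infrastructure": infrastructure,
        "office": office_area_m2,
        "total": it_area_m2 + infrastructure + office_area_m2,
    }


# Illustrative comparison: planning on IT area alone versus the full footprint.
it_only = 500.0
high_availability = total_floor_area(it_area_m2=500.0, infra_ratio=1.0, office_area_m2=150.0)
lower_availability = total_floor_area(it_area_m2=500.0, infra_ratio=0.5, office_area_m2=150.0)

print("IT room only:      ", it_only)
print("High availability: ", high_availability["total"])
print("Lower availability:", lower_availability["total"])
```

In this example, a plan based only on the 500 m² IT room would understate the high-availability footprint by more than half, which is why the ratio has to come from the design metrics rather than from a guess.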

Myth 6: Lack of flexibility in design leads to dead ends

The data center industry has made great strides in promoting the importance of modular design. However, using a modular scheme does not guarantee success. The modular approach is based on the idea of adding infrastructure equipment just in time, only when more capacity is needed, thereby protecting the investment. There are still companies that have led themselves to a dead end by misestimating future demand. Anything can change. A flexible, modular design approach is key to ensuring long-term benefits. Even the best power density plan can become obsolete because of consolidation, rapid business growth following mergers and acquisitions, or unplanned deployment of high-density equipment. On the electrical side, the design should reserve the ability to add UPS capacity online to modules that are already deployed.

Design the inputs and outputs of the power distribution system to accommodate future changes. Oversizing the distribution system for future capacity growth does not significantly increase TCO. On the mechanical cooling side, most users can meet their cooling needs with traditional room-level cooling, an appropriate raised floor depth, and a hot-aisle/cold-aisle layout. But once high-density equipment is introduced, everything changes. The core design should therefore allow row-level or cabinet-level cooling to be added online.

Myth 7: Misinterpreting the concept of PUE

Power usage effectiveness (PUE) is a tool that effectively measures efficiency and drives efficiency gains. But energy efficiency is often loosely defined, which ultimately leads to PUE being misinterpreted. In almost all new and expanded data centers, achieving a lower PUE value incurs additional investment cost. Companies often set a PUE target with good intentions but without considering all the factors involved. In practice, the investment cost and return on investment (ROI) of reaching the target must be fully understood, along with the interdependence between total cost of ownership (TCO) and the PUE target.

There are many ways to illustrate the delicate balance between PUE, ROI, and TCO. Here are three representative examples to be aware of:

What should be used as a reference for setting PUE design indicators? Is it a measurement of the "best day", or is it calculated based on the annual average?

Should PUE calculations be based on full or partial load in the data center? The efficiency of every device varies with its load factor. In real operation, the PUE value also changes with the time of day and the date.

Finally, the debate over water-cooled versus air-cooled chillers is ongoing. Either design is often configured to make more use of "free cooling" or an "economizer mode" to reduce PUE. When weighing TCO and ROI in the decision, for example, the operational requirements of a water-cooled chiller solution for make-up water and water treatment should be considered. It is recognized that a typical 2 MW data center may consume between 190 and 230 tons of water if cooling towers are used.

Used effectively, PUE can support overall business goals. But be careful not to miscalculate investment and operating cost budgets because the way PUE is calculated has been misinterpreted.
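The "best day" versus annual-average question can be made concrete with a short calculation. PUE is the total facility energy divided by the IT equipment energy over the same period. The sketch below is illustrative only: the function name and the four sample measurement periods are assumptions, chosen so that overhead shrinks relative to IT load as the load rises.

```python
def pue(facility_kwh: float, it_kwh: float) -> float:
    """PUE = total facility energy / IT equipment energy over the same period."""
    return facility_kwh / it_kwh


# Hypothetical measurement periods as (IT energy in kWh, total facility energy in kWh).
# Lower IT load gives a worse (higher) PUE because fixed overhead dominates.
samples = [
    (400, 720),
    (600, 960),
    (800, 1120),
    (1000, 1300),
]

per_period = [pue(facility, it) for it, facility in samples]
best_case = min(per_period)

# Average over the whole span: total facility energy over total IT energy.
energy_weighted = pue(
    sum(facility for _, facility in samples),
    sum(it for it, _ in samples),
)

print("Per-period PUE:", [round(value, 2) for value in per_period])
print("Best-case PUE: ", round(best_case, 2))
print("Average PUE:   ", round(energy_weighted, 2))
```

A design target quoted from the best period looks noticeably better than the figure for the whole span, so a PUE target should state both the load level and the averaging period it refers to.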

Myth 8: Misinterpreting LEED certification

So far, the U.S. Green Building Council (USGBC) has not established a dedicated LEED certification metric for data centers. Instead, a data center can be certified under the commercial building standards. Three basic misconceptions are common:

• Lack of basic understanding of qualifying conditions. This can be improved by reading relevant references.

• Deciding to pursue LEED certification as an afterthought. Work toward LEED certification should begin at the conceptual design stage, with formal certification granted when the project is completed. LEED-certified engineers or consulting firms that can provide this service should be involved early in the planning phase.

• Overlooking the additional costs of obtaining certification. Not accounting for these costs affects total cost of ownership (TCO) and business decisions.

Myth 9: The design scheme is too complicated

The simpler the design, the better. Even for a given availability requirement, there are more than a dozen ways to design an effective system. Redundancy commonly adds to the complexity, and even modular systems quickly become complex when different schemes are combined. When discussing a solution internally or consulting manufacturers, the primary goal should be to keep the design simple. The reasons are:

• Complexity means more equipment and components, and more components mean more points of failure.

• Human error. The statistics vary slightly from source to source, but the trend is the same: most data center downtime is caused by human error. Complex systems increase operational risk.

• Cost. A simple system means lower construction costs.

• Operating and maintenance costs. Complexity means more equipment and components, and the operating and maintenance costs required will rise exponentially.

• The design should be based on actual use. Many design schemes look excellent on drawings, and it may seem easy to judge and select configurations and assess availability risks from drawings alone. However, if the design does not take maintainability into account, both the availability of the system and the safety of personnel will be at risk during maintenance.
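The first reason in the list above, that more components mean more points of failure, can be illustrated with a simple series-availability calculation. This is a sketch under strong assumptions: every component is treated as a single point of failure on one path, failures are independent, and the 0.999 per-component availability and component counts are made-up values. Redundant (parallel) components behave differently and are not modeled here.

```python
import math


def series_availability(component_availabilities: list[float]) -> float:
    """Availability of a chain in which every component must work.

    Assumes independent failures, so the path availability is the
    product of the individual component availabilities.
    """
    return math.prod(component_availabilities)


# Hypothetical paths: same per-component availability, different component counts.
simple_path = [0.999] * 5
complex_path = [0.999] * 20

simple_result = series_availability(simple_path)
complex_result = series_availability(complex_path)

print(f"5 components in series:  {simple_result:.5f}")
print(f"20 components in series: {complex_result:.5f}")
```

Under these assumptions, the longer chain is less available even though each part is equally reliable, which is the quantitative side of the argument for keeping the design simple.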

Summary: Although many data center construction and expansion projects have failed in the past, the next project does not have to go the same way. By avoiding the nine myths described in this article, you can move forward on a path to success. To sum up:

1. Use a total cost of ownership (TCO)-based approach: link the overall business expenditure analysis to the risk analysis, and incorporate investment costs (CapEx), operating costs (OpEx), and energy costs into the cost model.

2. Determine design metrics and performance parameters: base the design metrics on risk analysis and business goals, then derive the design scheme from those metrics, including criticality tier, site selection, and spatial layout planning.

3. Keep the design scheme simple and flexible: adopt a design that meets availability requirements while keeping construction and operating costs low (simplicity is the key), and meet unplanned expansion needs with flexible design options.

4. If PUE and LEED certification are part of the metrics, be well aware of the common misconceptions and the cost of implementation.

With a total cost of ownership (TCO)-based approach to planning, new data center facilities can be built to meet the performance requirements and business needs of the enterprise now and in the future.